Why Enterprise AI Is Slow

Last Saturday I deployed an AI app to Railway in about ten minutes. I had the idea, grabbed the laptop, credit card, and done. I had a running service before my coffee got cold. It wasn’t anything special, just working with the OpenAI API and a basic Django app. But the experience was great, and it was easy to get something pretty impressive going in no time.

On Monday I started the process of getting a similar setup approved at work. That’ll take about six months. Maybe longer.

“Economically, a startup is best seen not as a way to get rich, but as a way to work faster.” – Paul Graham, The Hardest Lessons for Startups to Learn

Enterprise AI adoption isn’t slow because the technology doesn’t work. It’s slow because the process for getting access to the technology hasn’t changed since we were buying SAP licenses.

The personal stack

On your own time, the menu of tools is enormous. Railway, Vercel, Cloudflare Pages for hosting. RunPod, fal.ai, Replicate for GPU compute. Spin up a model endpoint, connect it to a frontend, tear it down when you’re done. For five bucks you can do the whole thing in one evening.

GitHub for version control, GitHub Actions for CI/CD that just works (most of the time).

Nobody asks you to fill out a use-case form, and nobody has to approve your architecture diagram. You just build. And generally the UX/DX is very good. The amount of time saved can be huge if you can use the latest and greatest products on the market.

That’s before you even get to AI. All of the above is just basic developer tooling. But when you add AI coding assistants like Claude or Codex into the mix, the gap between what’s possible on your own time and what’s possible at work gets absurd:

“Claude doesn’t make me much faster on the work that I am an expert on. Maybe 15-20% depending on the day. It’s the work that I don’t know how to do and would have to research. Or the grunge work I don’t even want to do. Many of the projects I do with Claude day to day I just wouldn’t have done at all pre-Claude. Infinity% improvement in productivity on those.” – Aaron Boodman

That infinity percent is the part that matters. It’s not about doing the same work faster. It’s about doing work you never would have attempted.

The enterprise stack

Now let’s imagine we’re at work.

We have a locked-down Azure or AWS account with most of the useful features turned off by IT policy. No quick-start templates. No one-click deploys. Everything configured for compliance, not speed.

Self-hosted on-prem GitLab, usually a few major versions behind, with none of the CI/CD stuff you’d get on GitHub or GitLab proper.

You spend more time fighting the tools than using them.

And you don’t pick your stack. You pick from a menu of what’s already been approved. The menu was probably written two years ago. It doesn’t include the best options for basics like hosting, let alone anything new in AI. You’re lucky if IT will just let you use ChatGPT.

The procurement gauntlet

Say you find a tool that would actually help. An API, a platform, whatever. Here’s what happens next.

You don’t sign up. You can’t just use a credit card. Oh no. Instead you meet with the vendor’s sales team. You explain your use case. They explain their pricing. A few more calls. Maybe a demo for your team or leadership.

Then you write a business case. Then you get budget approval. This alone can take a quarter if the timing doesn’t line up with planning cycles.

Then the real fun starts. Security review. Data governance review. Legal review of the contract terms. Where does the data flow? Who has access? What happens if the vendor gets breached? IT architecture review to make sure it fits.

Six months is optimistic. And that assumes you had budget in the first place. If you didn’t, add another quarter just for that conversation.

The real cost

The cost should be measured in time, not dollars.

Goldman Sachs reported in March 2026 that they still can’t find a meaningful relationship between AI adoption and productivity at the economy-wide level. But in the cases where teams actually got tools deployed? 30% productivity gains.

The AI works. The bottleneck is everything between “this would help” and “you’re allowed to use it.” Ask anyone at a smaller company if they’d give up their Claude subscription. All that infinity percent upside, stuck in the procurement queue.

That’s the part the analysts keep missing. They look at the macro numbers and wonder why AI isn’t moving the needle. The answer is sitting in procurement queues and security review backlogs at companies where some developer had an idea six months ago and still can’t touch the tool.

Multiply that across every team trying to experiment with AI in a large company. Hundreds of ideas that never get tested. Not because they were bad ideas. Because the cost of trying was too high.

The leadership problem

Here’s where it gets a bit uncomfortable.

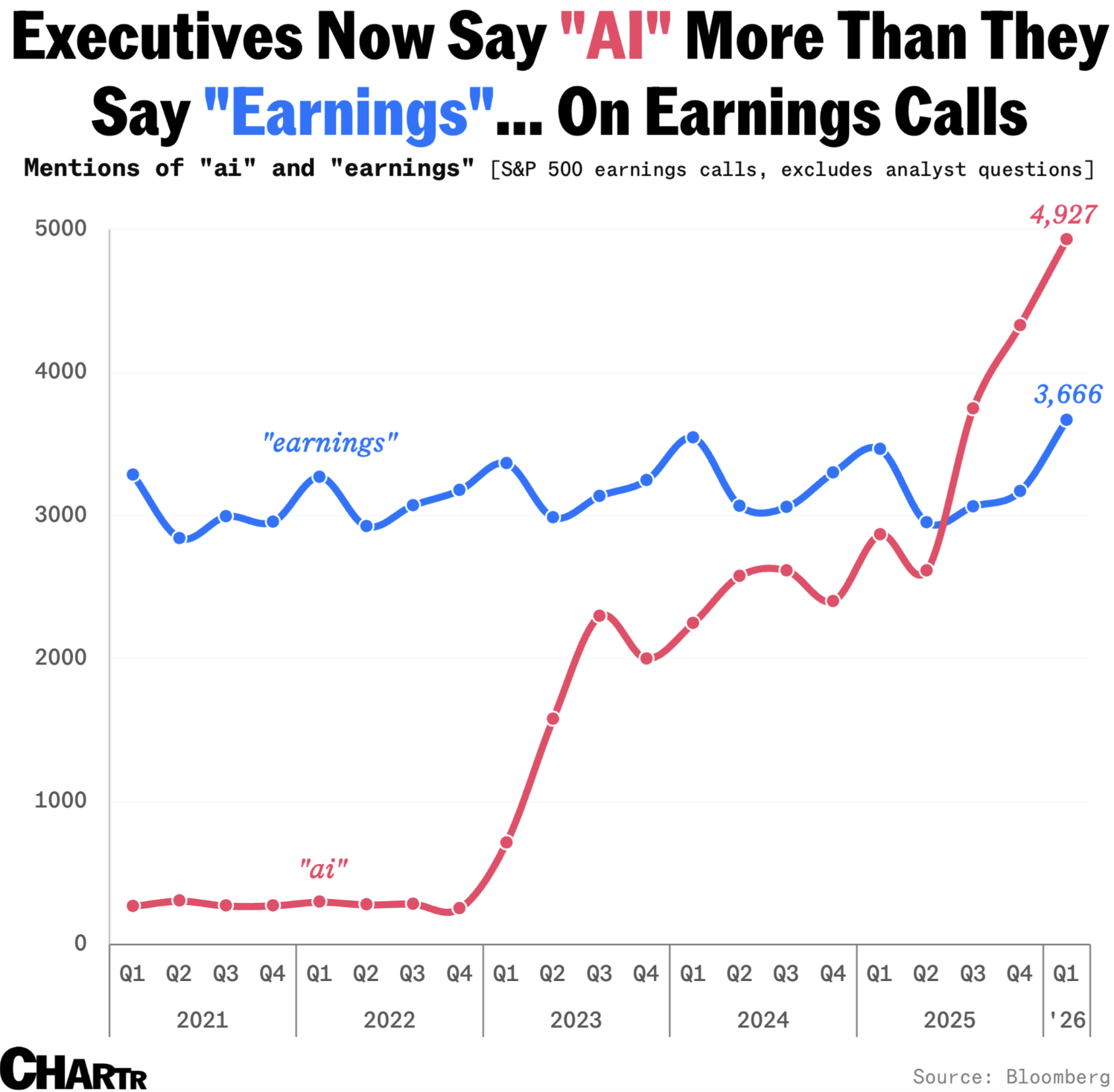

The executives reading those McKinsey reports and setting AI strategy? They’re not the ones experiencing this friction. They announce AI initiatives. They set the OKRs and KPIs. They allocate budget. They give big speeches and then they wonder why nothing’s moving.

Source: Bloomberg via Chartr / r/dataisbeautiful

And most of them barely use the tools themselves. 69% of CEOs and senior executives use AI less than an hour per week. 28% don’t use it at all. They’re making decisions about AI adoption without firsthand experience of what it can actually do.

They don’t know their developer spent three weeks waiting on a security review. They don’t know it took the team a month just to get permissions on a service they were already paying for. Or that once they finally got access, it turned out the locked-down version barely works compared to what’s available on the open market. They don’t know the “approved vendor list” hasn’t been updated since before GPT-4 came out.

And this gap is getting wider. The technology moves fast. The procurement process doesn’t move at all.

The execs who’ll do well here are the ones who ask their engineers what’s actually blocking them, and then go fix it. Not the ones who hire a consultancy to write another AI strategy deck.

“Stagnation is slow-motion failure.” – Tobi Lutke, CEO of Shopify

Why the controls exist

But look, the controls aren’t stupid.

When I swipe a credit card for a side project, the blast radius of a security incident is… my data. When someone at a hundred-thousand-person company connects a new service to internal systems, the blast radius is the entire organization. Customer data, employee data, proprietary IP. A different risk calculus entirely.

Procurement processes, security reviews, legal approvals: they exist because bad things happen when they don’t. In 2021, Codecov, a code coverage tool that seemed harmless enough, got compromised, and the attackers could pull credentials out of its customers’ CI environments. Codecov counted roughly 29,000 customers at the time. That’s the kind of thing these processes are designed to prevent. They’re scar tissue.

But the process was designed for buying SAP licenses and Oracle contracts. Multi-year, six-figure deals where three months of evaluation makes total sense. That same process now gets applied to a $200/month API that a developer wants to try for two weeks.

That’s the problem. Not that the controls exist. That there’s only one speed. Mega slow.

What would actually help

I love to complain as much as anyone, but I’m also biased towards doing things and trying to come up with solutions. So I’ll give my $0.02.

If the tool doesn’t touch production data, doesn’t connect to internal systems, and costs less than some low threshold, a team lead should be able to approve it in a week. Have credit cards for this. Have a team expedite the request. Whatever it takes.

That’s the whole idea. Risk-appropriate governance instead of one-size-fits-all governance.
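
To make the rule concrete, here is a rough sketch of how the routing could look in code. It is only an illustration of the three conditions above, not a real policy engine. The names (`ToolRequest`, `review_track`) and the $500 cutoff are made up for the example, since "some low threshold" is deliberately left open.

```python
from dataclasses import dataclass

# Hypothetical cutoff. The post only says "some low threshold".
FAST_TRACK_MAX_MONTHLY_USD = 500


@dataclass(frozen=True)
class ToolRequest:
    """A request to try a new tool (illustrative fields only)."""
    name: str
    monthly_cost_usd: float
    touches_production_data: bool
    connects_to_internal_systems: bool


def review_track(request: ToolRequest) -> str:
    """Return 'fast' (team lead approval) or 'full' (standard procurement)."""
    low_risk = (
        not request.touches_production_data
        and not request.connects_to_internal_systems
        and request.monthly_cost_usd < FAST_TRACK_MAX_MONTHLY_USD
    )
    return "fast" if low_risk else "full"


experiment = ToolRequest("trial API", 200, False, False)
platform = ToolRequest("company-wide platform", 80_000, True, True)

print(review_track(experiment))  # fast
print(review_track(platform))    # full
```

The point of the sketch is that failing any one of the three conditions sends the request down the full path. Only the requests that pass all three get the fast lane.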

But it requires leadership that understands the difference between a $200/month experiment and a million-dollar platform decision. And right now, most large companies treat them exactly the same way. And they pay for it with time, the one thing you can’t earn back.